Haptics and Eye Tracking

This project uses haptic channels to assist navigation in scenarios where vision and hearing fall short. Such scenarios arise in emergencies, such as a building fire where smoke obscures most of the view. They also arise in daily life when too much information is present, for example when navigating a large airport with poorly placed signs or looking for a specific product in a supermarket.

My colleague (cf. the picture below) implemented this haptic navigation system on a bHaptics TactSuit X40, a vest with 40 vibrotactile motors distributed across its front and back. The vest periodically provides vibrotactile feedback that indicates the direction of the target.

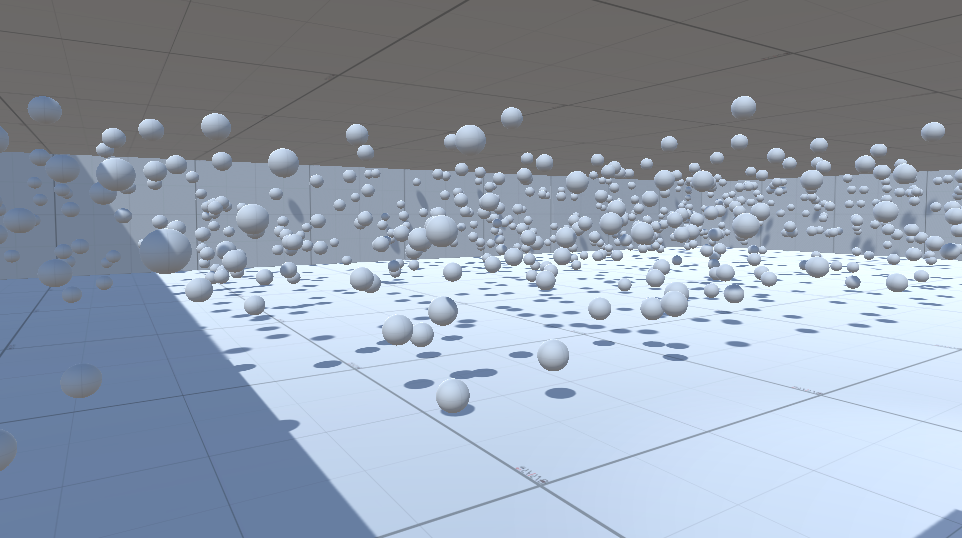
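As a rough illustration of how a target direction could be turned into a vest location, the sketch below computes the target's bearing relative to the user's heading and maps it to a side and a motor column. It is not the project's implementation: the 5 x 4 grid per side, the coordinate convention, and the function names `relative_bearing` and `motor_column` are all assumptions made for this example, and no vendor SDK calls are used.

```python
import math

# Assumed layout (hypothetical): each side of the vest is a grid of
# 5 rows x 4 columns, i.e. 20 motors on the front and 20 on the back.
ROWS, COLS = 5, 4


def relative_bearing(user_pos, user_yaw_deg, target_pos):
    """Signed angle in degrees from the user's facing direction to the target.

    Positions are (x, z) on the ground plane. A yaw of 0 faces +z, and
    positive angles point to the user's right (clockwise seen from above).
    The result is wrapped into [-180, 180).
    """
    dx = target_pos[0] - user_pos[0]
    dz = target_pos[1] - user_pos[1]
    world_deg = math.degrees(math.atan2(dx, dz))
    return (world_deg - user_yaw_deg + 180.0) % 360.0 - 180.0


def motor_column(bearing_deg):
    """Map a relative bearing to (side, column).

    Targets within 90 degrees of the facing direction use the front grid,
    all others the back grid. Columns are counted from the user's left (0)
    to the user's right (COLS - 1) on both sides.
    """
    side = "front" if abs(bearing_deg) <= 90.0 else "back"
    # Lateral component of the direction: -1 is fully left, +1 fully right.
    lateral = math.sin(math.radians(bearing_deg))
    column = min(COLS - 1, int((lateral + 1.0) / 2.0 * COLS))
    return side, column


if __name__ == "__main__":
    bearing = relative_bearing((0.0, 0.0), 0.0, (10.0, 0.0))
    print(bearing, motor_column(bearing))
```

With an even number of columns there is no centre column, so a target near dead ahead or directly behind lands on one of the two middle columns. A real system might instead drive both middle columns, or blend intensities across neighbouring motors.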

To test the effectiveness of the system, we simulated several scenarios in virtual reality in which users had to navigate a virtual environment with minimal visual and auditory cues, as depicted in the pictures below.

Floating Orbs: Find a target sphere out of hundreds of spheres of the same appearance [1].

Fog Labyrinth: Navigate through a maze under the influence of thick smoke [1].

Most users who tested the system were able to complete these tasks, regardless of demographics.

Eye-tracking data were also recorded during the user tests and revealed correlations between saccadic behaviors, user states, and navigation performance. For a detailed analysis of the eye-tracking data, please refer to our publication at the 2026 IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR), "Advanced Haptic Directional Awareness in Virtual Reality".
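This page does not say how saccades were identified in the recordings. One common approach is velocity-threshold identification (I-VT), which flags runs of gaze samples whose angular velocity exceeds a threshold. The sketch below is a minimal version of that general technique, not the analysis used in the study: the function name `detect_saccades`, the input format, and the 30 deg/s default are assumptions for illustration.

```python
import math


def detect_saccades(timestamps, gaze_deg, velocity_threshold=30.0):
    """Return (start_index, end_index) sample ranges flagged as saccades.

    timestamps: sample times in seconds, in increasing order.
    gaze_deg: (horizontal, vertical) gaze angles in degrees, one per sample.
    velocity_threshold: angular speed in deg/s above which the interval
        between two samples counts as saccadic (assumed default).
    """
    saccades = []
    start = None
    for i in range(1, len(timestamps)):
        dt = timestamps[i] - timestamps[i - 1]
        if dt <= 0:
            continue  # skip duplicated or out-of-order samples
        dx = gaze_deg[i][0] - gaze_deg[i - 1][0]
        dy = gaze_deg[i][1] - gaze_deg[i - 1][1]
        velocity = math.hypot(dx, dy) / dt
        if velocity > velocity_threshold:
            if start is None:
                start = i - 1  # the saccade begins at the previous sample
        elif start is not None:
            saccades.append((start, i - 1))
            start = None
    if start is not None:
        # The recording ended while a saccade was still in progress.
        saccades.append((start, len(timestamps) - 1))
    return saccades


if __name__ == "__main__":
    t = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05]
    gaze = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (5.0, 0.0), (10.0, 0.0), (10.1, 0.0)]
    print(detect_saccades(t, gaze))
```

From the detected ranges, per-trial measures such as saccade count, duration, or amplitude can be derived and compared against navigation performance. Practical pipelines usually also filter noise and handle blinks, which this sketch omits.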

Associated Publications

- Botev, J., Dias da Silva, E., & Sun, N. (2026). Advanced Haptic Directional Awareness in Virtual Reality. In Proceedings of the 2026 IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR). [link]

- Botev, J., Dias da Silva, E., & Sun, N. (2024). Haptic Directional Awareness in Virtual Reality. In 21st EuroXR International Conference (EuroXR). [link]